A laboratory balance is a precision instrument used to measure the mass of a sample with a high degree of accuracy and repeatability. The term is used across chemistry, pharmaceutical research, food science, environmental testing, and industrial quality control: any field where the exact quantity of a substance determines whether a result is valid. Most people use the words “balance” and “scale” interchangeably in everyday speech, and in many commercial and industrial contexts the distinction barely matters. In a laboratory, it matters considerably. The difference affects the instrument you buy, the environment you place it in, the calibration procedure you follow, and whether your results meet the regulatory requirements of your field. This article explains what a laboratory balance is, how it differs from a scale, what the key specifications mean, and which balance type belongs in which application.

The Technical Difference Between a Balance and a Scale

The distinction between a balance and a scale is grounded in physics, though modern electronics have blurred the line considerably.

A scale measures weight — the force that gravity exerts on an object. Because gravity varies slightly with location on Earth, a scale that reads correctly at sea level in New York will read slightly differently at altitude in Denver without recalibration. Commercial shipping scales, floor scales, and bathroom scales are all scales in this strict sense — they measure the gravitational force acting on a load and convert it to a mass-equivalent number.
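The size of this location effect can be estimated with a short calculation. The sketch below assumes a scale that reports force divided by the gravity value it was calibrated with; the two gravity figures are approximate, illustrative values rather than surveyed constants, and the function name is hypothetical.

```python
# Assumed, approximate local gravity values in m/s^2 (illustrative only).
G_NEW_YORK = 9.802
G_DENVER = 9.796


def uncorrected_reading(true_mass_g: float, g_calibrated: float, g_actual: float) -> float:
    """Reading shown by a force-measuring scale calibrated at one site and used at another."""
    # The scale senses force (mass * g_actual) but still divides by g_calibrated.
    return true_mass_g * g_actual / g_calibrated


reading = uncorrected_reading(1000.0, G_NEW_YORK, G_DENVER)
error_g = reading - 1000.0
print(f"1000 g load reads {reading:.3f} g (error {error_g:+.3f} g)")
```

Under these assumed values, a 1 kg load reads roughly 0.6 g low after the move. That is invisible on a floor scale but thousands of display increments on an analytical balance, which is why recalibration at the point of use matters.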

A balance measures mass, the intrinsic quantity of matter in an object, independent of gravity. A traditional two-pan balance achieves this by comparing the unknown mass against known reference weights on an equal-arm pivot; gravity acts equally on both sides, so the comparison is gravity-independent. Modern electronic laboratory balances reach the same result by a different route. Electromagnetic force compensation measures the force required to keep the weighing pan in equilibrium, and the balance converts that force to mass using a calibration against reference weights performed at the instrument’s specific location, which accounts for local gravity.

In practice, Sartorius — one of the world’s leading laboratory balance manufacturers — notes that while the terms balance and scale are technically different, they are used interchangeably across most modern laboratory contexts because electronic balances display results in mass units (grams, milligrams, micrograms) after applying the appropriate correction. What matters operationally is not the terminology but the specifications: readability, capacity, repeatability, and linearity.

The Four Key Specifications of a Laboratory Balance

Understanding these four terms is essential before evaluating any laboratory balance.

Readability is the smallest increment the balance can display. An analytical balance with 0.0001 g (0.1 mg) readability displays results to four decimal places in grams. A precision balance with 0.001 g (1 mg) readability displays to three decimal places. As Adam Equipment explains, readability is sometimes called sensitivity: the smallest change in mass that causes the displayed value to change. Readability sets the finest mass difference the balance can show, and so is the first filter on which applications it suits.
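As a rough illustration of how readability maps to displayed decimal places, the sketch below rounds a mass to the nearest display increment. It assumes power-of-ten readabilities such as 0.001 g or 0.0001 g; the function names are hypothetical and do not describe any manufacturer's firmware.

```python
import math


def decimals_for(readability_g: float) -> int:
    """Decimal places (in grams) for a power-of-ten readability."""
    return max(0, round(-math.log10(readability_g)))


def display(mass_g: float, readability_g: float) -> str:
    """Round a mass to the nearest display increment and format it."""
    steps = round(mass_g / readability_g)  # whole number of increments
    return f"{steps * readability_g:.{decimals_for(readability_g)}f}"


print(display(12.34567, 0.0001))  # analytical balance: four decimal places
print(display(12.34567, 0.001))   # precision balance: three decimal places
```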

Accuracy is how closely the balance’s reading corresponds to the true mass of the sample. A balance can be highly readable but inaccurate — displaying many decimal places that are systematically wrong. Accuracy is assessed through calibration against certified reference weights traceable to NIST (National Institute of Standards and Technology). It is distinct from readability and determined by the quality of the weighing cell, the calibration state of the instrument, and the environmental conditions during measurement.

Repeatability — also called precision — is the ability of the balance to produce the same result when the same mass is placed on the pan under identical conditions multiple times. It is expressed as the standard deviation of repeated measurements. A balance with excellent repeatability produces consistent readings across a series of weighings of the same sample. Poor repeatability means the readings vary — an outcome caused by vibration, air currents, static electricity, or a degraded weighing cell.
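Because repeatability is expressed as a standard deviation, it can be estimated from a short series of weighings. The sketch below uses made-up readings for a nominal 10 g test weight and Python's standard `statistics.stdev`; the number of readings and any acceptance limit would come from your own procedure, not from this example.

```python
import statistics


def repeatability(readings_g: list[float]) -> float:
    """Sample standard deviation of repeated weighings of the same load."""
    if len(readings_g) < 2:
        raise ValueError("need at least two readings")
    return statistics.stdev(readings_g)


# Hypothetical readings (grams) of the same test weight, ten placements.
readings = [10.0001, 10.0002, 10.0000, 10.0001, 10.0003,
            10.0001, 10.0002, 10.0000, 10.0001, 10.0002]

sd_g = repeatability(readings)
print(f"repeatability: {sd_g * 1000:.3f} mg")
```

For these illustrative readings the standard deviation is just under 0.1 mg, about one display increment on an analytical balance.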

Linearity is the accuracy of the balance across its full capacity range, not just at a single point. A balance calibrated at 100 g may read correctly at that weight but diverge from the true value at 50 g or 200 g. Camlab describes linearity as the ability of the balance to follow a linear relationship between the applied mass and the displayed value across the full weighing range. Poor linearity causes systematic errors at weights away from the calibration point.
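One simplified way to look at linearity is to place several reference weights across the range and record the worst deviation between reading and reference. The sketch below does only that; the test points are invented, and formal linearity tests defined by manufacturers or standards may use a different procedure.

```python
def linearity_error(points: list[tuple[float, float]]) -> float:
    """Largest absolute deviation between reading and reference mass."""
    return max(abs(reading - reference) for reference, reading in points)


# Hypothetical (reference mass, displayed reading) pairs in grams.
# The balance is assumed to have been calibrated at 100 g.
test_points = [
    (50.0, 50.0003),
    (100.0, 100.0000),
    (150.0, 149.9998),
    (200.0, 199.9996),
]

worst = linearity_error(test_points)
print(f"worst deviation: {worst * 1000:.1f} mg")
```

In this made-up data set the reading is exact at the 100 g calibration point and drifts to 0.4 mg off at 200 g, which is the pattern the paragraph above describes.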

How a Laboratory Balance Differs From an Industrial or Commercial Scale

The differences are not cosmetic. They reflect genuinely different engineering priorities.

Readability. The finest industrial floor scales read to 0.5 lb or 1 lb. The finest laboratory balances read to 0.000001 g (1 microgram) or finer. Since 0.5 lb is about 227 g, that is a difference of roughly eight orders of magnitude. Industrial scales are built for large loads and operational speed. Laboratory balances are built for small samples and measurement precision.

Measurement mechanism. Most industrial scales use strain gauge load cells: metal elements that flex under load and convert that flex into an electrical signal. Laboratory balances, particularly analytical and semi-micro models, use electromagnetic force compensation (EMFC), a fundamentally different technology that generates an electromagnetic counterforce equal to the sample’s weight and derives the reading from the current needed to hold the pan in position. EMFC produces higher accuracy and better repeatability than strain gauge technology at the readability levels required for laboratory work.

Environmental sensitivity. An industrial floor scale at a shipping dock performs reliably in drafts, temperature swings, and vibration. An analytical balance reading to 0.1 mg is affected by a slight draft from an air conditioning vent, a temperature change of 1–2°C, a nearby centrifuge running, or static electricity on the weighing vessel. Laboratory balances require controlled placement conditions — level, vibration-free surfaces, away from drafts and direct sunlight — that industrial scales do not.

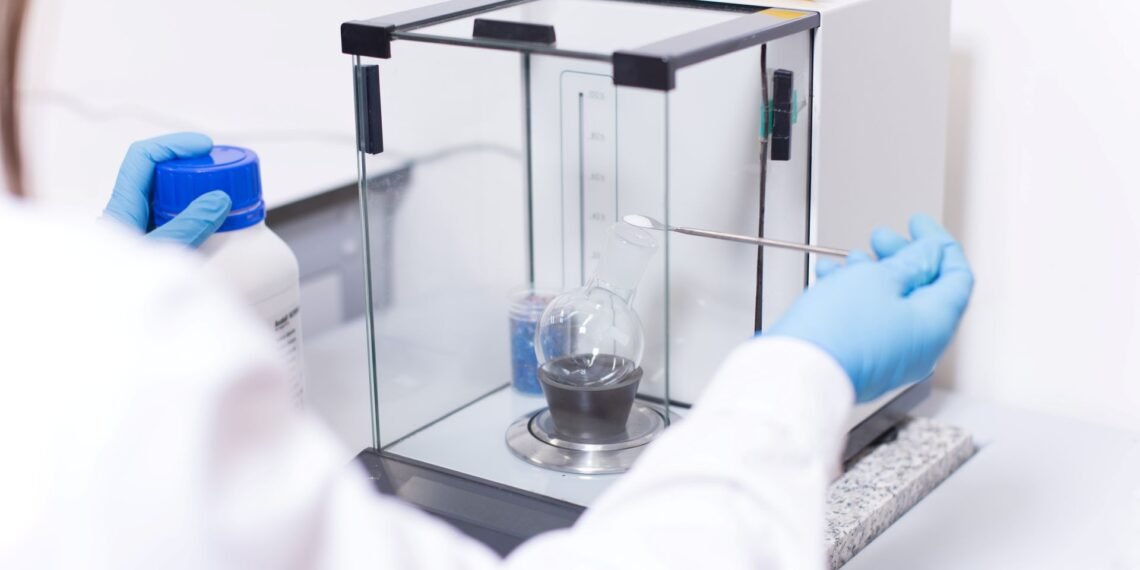

Draft shield. Analytical and semi-micro balances include an enclosed draft shield — a transparent chamber surrounding the weighing pan — to protect measurements from air movement. This component has no equivalent in industrial or commercial weighing. It is the clearest physical indicator that an instrument is a laboratory balance rather than a scale.

Calibration requirements. Industrial scales used for commercial transactions are subject to periodic inspection by state Weights and Measures authorities. Laboratory balances require calibration traceable to NIST reference standards, documented in formats that satisfy GLP, GMP, or ISO/IEC 17025 requirements, depending on the regulatory framework of the laboratory. The documentation trail is more rigorous, and in regulated industries such as pharmaceuticals, it forms part of the audit record for every batch produced.

The Four Main Types of Laboratory Balance

The laboratory balance category covers a wide range of instruments. A full treatment of each type appears in our article on types of laboratory balances. In summary, the four main categories are:

Microbalance and ultra-microbalance: Readability of 0.001 mg (1 microgram) or finer. Used for weighing extremely small samples — filter media, particulates, nano-scale materials. Requires vibration isolation and controlled environments. Maximum capacity typically 2–10 g.

Semi-micro balance: Readability of 0.01 mg (10 micrograms). Used in pharmaceutical research, fine chemical analysis, and gravimetric work with small samples. Draft shield standard. Capacity typically 60–220 g.

Analytical balance: Readability of 0.1 mg (0.0001 g). The most common high-precision balance in research and quality control laboratories. Enclosed draft shield standard. Capacity typically 60–520 g. The standard tool for quantitative chemical analysis, reagent preparation, and sample weighing in regulated industries.

Precision balance: Readability of 0.001 g to 0.1 g (1 mg to 100 mg). Used for routine laboratory weighing where sub-milligram precision is not required — solution preparation, bulk material weighing, general QC. Higher capacity than analytical balances, typically up to several kilograms.
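The four categories above can be turned into a rough first-pass selector. The sketch below encodes the finest readability and the upper end of the typical capacity range quoted for each type; the 5,000 g figure standing in for "several kilograms" is an assumption, and the function is a hypothetical illustration, not a substitute for a manufacturer's specification sheet.

```python
from typing import Optional

# (type, finest readability in g, typical maximum capacity in g),
# ordered from coarsest to finest.
BALANCE_CLASSES = [
    ("precision", 0.001, 5000.0),   # "several kilograms": 5000 g is an assumption
    ("analytical", 0.0001, 520.0),
    ("semi-micro", 0.00001, 220.0),
    ("microbalance", 0.000001, 10.0),
]


def suggest_balance(required_readability_g: float, sample_mass_g: float) -> Optional[str]:
    """Return the coarsest balance type that covers both requirements, if any."""
    for name, readability_g, capacity_g in BALANCE_CLASSES:
        if readability_g <= required_readability_g and sample_mass_g <= capacity_g:
            return name
    return None  # no single category fits; the requirement needs rethinking


print(suggest_balance(0.0001, 100.0))    # analytical
print(suggest_balance(0.01, 2000.0))     # precision
print(suggest_balance(0.000001, 50.0))   # None: too heavy for a microbalance
```

Picking the coarsest type that still meets the requirement reflects the usual trade-off: finer readability brings lower capacity and stricter environmental demands.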

Where Laboratory Balances Are Used

Laboratory balances appear in every sector where measurement of small quantities drives a decision or a result.

Pharmaceutical research and manufacturing: Weighing active pharmaceutical ingredients (APIs) and excipients for formulation. Regulatory frameworks, including USP Chapter 41 and FDA GMP requirements, specify minimum balance performance standards for pharmaceutical weighing.

Chemical analysis: Preparing standard solutions, weighing reagents for reactions, and gravimetric analysis of unknowns. The accuracy of the balance directly determines the accuracy of every concentration calculation and analytical result.

Food science and nutrition testing: Weighing samples for moisture analysis, nutritional analysis, and quality control. Both bench-top precision balances and moisture analyzers are standard equipment.

Environmental testing: Weighing filter membranes before and after sample collection to determine particulate mass. PM2.5 and PM10 gravimetric filter analysis typically calls for a microbalance or, at minimum, a semi-micro balance, because the collected particulate mass can be only a few hundred micrograms.

Academic research: Teaching laboratories use precision balances for routine experiments; research laboratories use analytical and semi-micro balances for published scientific work where mass measurement is part of the experimental record.

Industrial quality control: Weighing components, checking fill weights, verifying formulation batches. Precision balances are standard in production QC environments; analytical balances are used where tighter tolerances apply.

FAQs

What is a laboratory balance used for?

A laboratory balance is used to measure the mass of small samples with high accuracy and repeatability. Applications include weighing reagents and pharmaceutical ingredients, preparing standard solutions, gravimetric analysis, moisture testing, and quality control in pharmaceutical, chemical, food, and research environments.

What is the difference between a balance and a scale?

Technically, a scale measures weight — the force of gravity on an object — while a balance measures mass, which is gravity-independent. In modern electronic instruments, the distinction is less sharp because electronic balances apply gravity corrections and display results in mass units. The practical difference lies in readability, measurement mechanism, and environmental sensitivity — laboratory balances read far smaller quantities and require more controlled operating conditions than commercial or industrial scales.

What is readability in a laboratory balance?

Readability is the smallest increment the balance can display. An analytical balance with 0.0001 g readability can display changes as small as one ten-thousandth of a gram. It is the primary specification used to match a balance to an application — the readability must be fine enough to detect the smallest mass difference that matters in the measurement being performed.

What is the most common type of laboratory balance?

The analytical balance — with 0.1 mg (0.0001 g) readability and a capacity of typically 60–520 g — is the most widely used high-precision balance in research, pharmaceutical, and quality control laboratories. It balances the precision needed for most scientific weighing applications against a practical capacity range and ease of use.

Do laboratory balances need to be calibrated?

Yes. All laboratory balances require regular calibration against certified reference weights traceable to NIST to maintain accuracy. In regulated laboratory environments — pharmaceutical, food, environmental testing — calibration must be documented, and in ISO/IEC 17025 accredited laboratories, calibration must be performed using accredited procedures with full measurement uncertainty reporting.

Conclusion

A laboratory balance is not simply a more accurate version of a shipping or commercial scale — it is a fundamentally different instrument built around a different measurement principle, engineered for a different environment, and held to a different calibration standard. The critical specifications — readability, accuracy, repeatability, and linearity — define what a balance can measure and what regulatory requirements it can satisfy. Understanding these terms before selecting an instrument saves the expense of choosing the wrong balance for an application and the compliance risk of using an instrument that does not meet the documentation requirements of your field. For the complete breakdown of balance types and their applications, see our guide to types of laboratory balances.